- français

- English

Bigdata2015-transaction-manager

PROJECT DESCRIPTION

See Moodle or this PDF document.

ARCHITECTURAL CONSIDERATIONS

We want to build a YARN application performing transactional key-value storage. In other words, it should be able to run transactions in parallel on different node of the cluster. Each of these transactions consists of key-value operations.The key design principle here is locality, i.e.:

- we want keys having something in common (determined by hashing) to be stored as close as possible in the cluster; and

- we want the node accessing the data to have most of the data locally or in neighbouring nodes.

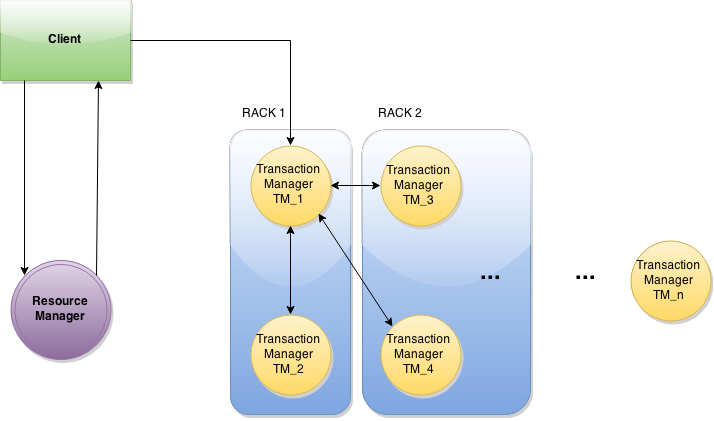

The high-level architecture is illustrated in the schema below. It consists of a client (maybe several), a ResourceManager which has some knowledge of the network, and a bunch of Transaction Managers which runs transactions. We think that each transaction will be given by the user an associated locality hash which determines which Transaction Manager will master the transaction execution (the Resource Manager is aware of which locality hash maps to which Transaction Manager). Then, this would be the responsibility of the master Transaction Manager to communicate with other Transaction Managers if it needs data.

The other key design principle here is the use of hashing. The transaction algorithms (2PL, MVCC) might require exchanges between nodes but we want to keep this number small by having a hash function mapping keys to nodes easily (avoiding indirect exchanges with the Resource Manager to know where the data is).

Transaction manager

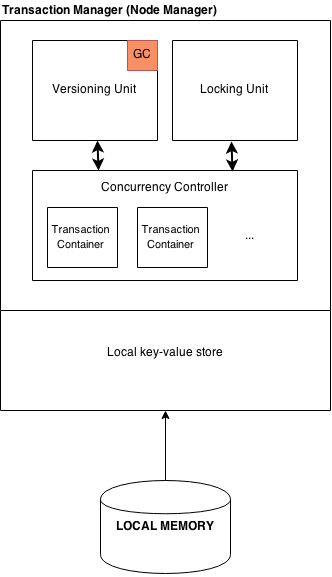

The transaction manager is responsible for a bunch of things:

- Running transactions (in YARN containers?); and

- Dealing with the transactional algorithms (concurrency control, versioning, ...).

SIMPLIFICATIONS

The project might be very ambitious for a 9-weeks project. We therefore consider some simplifications in the design that then can be unsimplified at the end of the project (some of them at least).

Key-values are be stored in non-persistant memory.

The main topic of this project being the transaction manager, not the key-value store, we consider that local in-memory storage is enough.

Dummy machine assignments based on the hash of the key.

At first, we plan to use very simple hard-coded static hashing mechanism even if we hope to come up with a distributed dynamic hashing mechanism by the end of the project.

Transaction Managers do not communicate and no data is exchanged.

The transaction managers only deal with their local data and never access data they don't have locally. This is the simplification that must be unsimplified first.

WORK PACKAGES

Deliverable 1: Feasibility Study to Build on Top of YARN

The main goal of this first stage would be to come up with a design inside YARN to show that the design is indeed possible. This mainly consists in studying YARN and setting up the system with dummy components.

| How do we build these nodes in YARN? | Philémon, Georges |

| Simple 1-day in-memory key-value store implementation | Georges |

| Client-Transaction Manager communication | Ege, Sachin |

| Concrete definition of interfaces of project's modules | Andy, Florian |

Estimated duration: 2 weeks - Deadline: March, 23th

Deliverable 2: Initial Prototype

The goal of this second stage is to implement the key modules with all the above-mentioned simplifications.

| MVTO implementation, versioning subsystem | Florian, Andy |

|

Locking subsystem

|

Georges, Philémon |

| 2PL, MVCC2PL implementation | Sachin, Ege |

| Transaction Manager infrastructure implementation | Georges, Philémon |

Estimated duration: 4 weeks - Deadline: April, 29th

Deliverable 3: Make it work well!

The goal of this last phase is to get rid of the maximum number of simplifications discussed earlier.

| Benchmarking system | Andy, Florian |

|

Network infrastructure needed for distribution |

Georges, Philémon |

| 2-phase commit implementation | Sachin |

| Centralized dead lock prevention | Ege |

| Test framework for concurrent schedule testing | Sachin, Andy & Philémon |

THE TEAM

- Favrod Philémon (team leader),

- Roulin Andy,

- Bagcioglu Egeyar Özlen,

- Depraz Florian Vincent,

- Piskas Georgios, and

- Sachin Basil John.